94% of Americans don't understand privacy risks of AI at work, says NordVPN

By SSN Staff

Updated 1:59 PM CST, Tue January 27, 2026

NEW YORK — Ahead of Data Privacy Day on January 28, cybersecurity company NordVPN has revealed new insights into the privacy risks Americans face from using AI tools in the workplace.

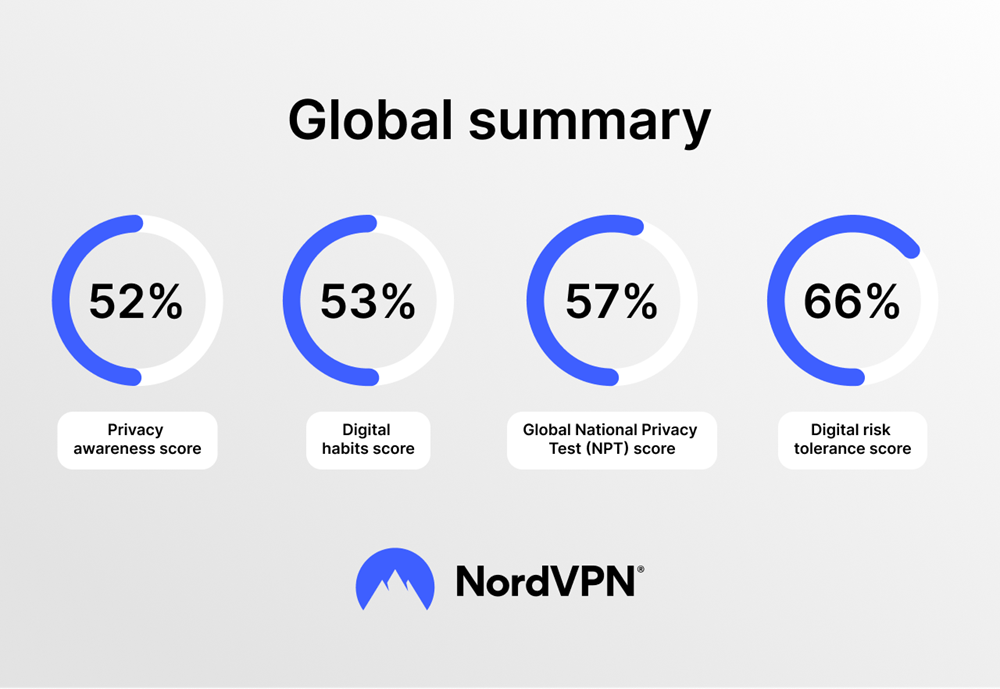

According to data from the National Privacy Test (NPT) for the full year of 2025, 94% of Americans do not understand what privacy issues to consider when using AI for work. By using assistants like ChatGPT, Copilot, and other generative tools, NordVPN said, workers may be unknowingly exposing sensitive personal and company data.

"The rapid adoption of AI in the workplace has outpaced our understanding of its risks. People are typing confidential information into AI tools without realizing where that data goes, how it's stored, or who might have access to it," says Marijus Briedis, chief technology officer at NordVPN. "Unlike a conversation with a colleague, interactions with AI tools can be logged, analyzed, and potentially used to train future models. When employees share client details, internal strategies, or personal information with AI assistants, they may be creating privacy vulnerabilities they never intended."

The risks of AI don’t end at work

Americans are also increasingly becoming targets of AI-powered attacks, as the same technology that boosts workplace productivity is being weaponized by cybercriminals to create scams that are more convincing than ever before.

According to the National Privacy Test, nearly one in four Americans (24%) cannot correctly identify common scams carried out using AI technology, such as deepfakes and voice cloning. As AI capabilities extend beyond voice cloning to fabricating entire videos, complete with realistic body movements of generated characters that may resemble real people, these scams are becoming increasingly difficult to detect.

The consequences for individuals are already severe. According to previous NordVPN research, 78% of Americans encountered online scams in the past two years, and 20% of them lost money as a result. Nearly half (46%) of US respondents admitted to engaging with an email link that they later realized was part of a cyber scam.

"AI has simplified cybercrime. You no longer need technical expertise to craft a convincing phishing email, clone someone's voice, or build a fake shopping website that looks identical to the real thing," says Briedis. "Scammers use AI to design almost identical replicas of popular retail sites. The barrier to entry for cybercriminals has never been lower."

You can read the full report online at nationalprivacytest.org/report.
